Your AI Strategy Is Only As Strong As Your Workforce

"Generative AI alone could add up to $4.4 trillion annually to the global economy, but only if organizations can close the capability gap."

Artificial intelligence has moved beyond experimentation. Across industries, organizations are embedding AI into products, workflows, and decision-making systems. Yet while investment in AI technologies is accelerating, enterprise readiness is not keeping pace.

Many organizations are discovering that the real challenge of AI is not building models. It is operating them responsibly, securely, and at scale.

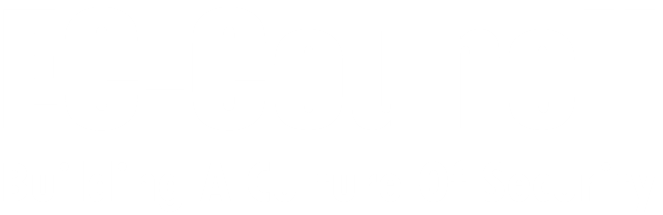

Key indicators highlight the scale of the gap:

- 88% of organizations have adopted AI in at least one business function (McKinsey State of AI, 2025).

- $5.5 trillion in projected economic loss is linked to IT and AI skills shortages (IDC, 2024).

- 39% of current job skills are expected to change by 2030 (World Economic Forum Future of Jobs Report, 2025).

- Workers with AI skills command a 56% wage premium over peers in similar roles without AI skills, double the premium seen just a year prior (PwC Global AI Jobs Barometer, 2025).

Why AI Initiatives Stall Without Workforce Readiness

AI initiatives often begin with promising prototypes. But moving from experimentation to operational systems introduces entirely new challenges. Enterprise AI systems interact with sensitive data, connect to internal systems, and operate within regulatory and governance environments. Without the right people in place, organizations face compounding risks.

- No program leadership to scale AI beyond pilots

- Security teams unprepared for AI-specific threats

- Governance frameworks that stay theoretical

- Unclear ownership across product, security, legal & risk

- Workforce skill gaps slowing adoption across business units

- AI deployed without compliance or audit readiness

What Happens Without AI-Ready Teams

These are not hypothetical risks. Several high-profile incidents have already demonstrated what happens when AI systems are deployed without adequate security, governance, or workforce capability.

Moltbot Breach

1,000+ unsecured AI assistant instances exposed to the internet with open admin ports and no authentication, enabling remote code execution.

Source: GitHub Security Research

DeepSeek Data Exposure

Sensitive database exposed publicly due to misconfiguration. Included chat logs, API keys, and internal metadata.

Source: Wiz Research

Samsung ChatGPT Leak

Engineers accidentally shared proprietary code via ChatGPT. Samsung banned generative AI use internally afterward.

Source: TechCrunch

Air Canada Chatbot Ruling

Chatbot gave false bereavement fare info; airline held legally liable.

Source: The Guardian

Microsoft Copilot Data Leakage

Researchers demonstrated prompt injection attacks could exfiltrate sensitive data from Microsoft 365 Copilot.

Source: Black Hat USA 2024 research

Chevrolet Dealership Exploit

Chatbot prompt-injected to agree to sell a Chevrolet Tahoe for $1. Highlighted LLM prompt-injection vulnerabilities.

Source: Cyber News

Italy Bans ChatGPT

Temporarily blocked ChatGPT over GDPR and age verification issues.

Source: BBC

Microsoft Tay

Chatbot hijacked by users to produce racist and offensive content within 16 hours.

Source: The Guardian

Each of these incidents points to the same conclusion: organizations that deploy AI without the workforce capability to secure and govern it are exposing themselves to financial, legal, and reputational risk.

EC-Council's ADG Framework For Enterprise AI Workforce Development

The ADG Framework is a structured model for building enterprise AI capability across the full lifecycle of AI systems. It organizes workforce readiness around three core responsibilities, ensuring organizations can move from experimentation to sustainable, secure deployment.


Adopt

Implement AI solutions and scale them from experimentation to enterprise deployment.

- AI literacy across teams

- Program management & delivery

- Business-AI strategy alignment

- Cross-functional AI leadership

C|AIPM — Certified AI Program Manager

Defend

Secure AI systems against emerging threats that traditional cybersecurity doesn’t cover.

- AI penetration testing & red teaming

- Prompt injection testing

- Model exploitation & adversarial ML

- Securing AI pipelines

C|OASP — Certified Offensive AI Security Professional

Govern

Ensure AI systems operate responsibly and in compliance with regulatory expectations.

- AI risk assessment

- Responsible AI policies

- Regulatory compliance

- AI decision-making oversight

C|RAGE — Certified Responsible AI Governance & Ethics

Together, these three capabilities enable organizations to move from AI experimentation to sustainable enterprise deployment, with the right teams in place at every stage.

Artificial Intelligence Essentials (AI|E)

Preparing The Enterprise Workforce For The AI Era

The organizations that succeed will not be defined by the sophistication of their models alone. They will be defined by their ability to build teams capable of implementing, securing, and governing AI systems responsibly. Through the ADG Framework and Enterprise AI Credential Suite, EC-Council provides a structured pathway for enterprises to build these capabilities across their workforce.

"By developing professionals who can Adopt, Defend, and Govern AI, organizations can move beyond experimentation and build AI systems that deliver sustainable value."

Additionally, we offer Artificial Intelligence Essentials (AI|E) to equip teams with foundational AI literacy and responsible AI usage skills.

Build Your AI-Ready Workforce Today

Get your team certified with EC-Council’s Enterprise AI Credential Suite.

- Enterprise-grade, role-based certifications

- Reduce AI risk across security, legal, and compliance

- Upskill teams to Adopt, Defend, and Govern AI at scale


© 2026 EC-Council & Forma Education. All rights reserved.
